Have you ever wondered how businesses pull the information they need from an internet flooded with data? One answer is list crawling. It is a form of automated web scraping that systematically works through a list of pages to extract structured data such as product information, job listing details, and directory entries.

It removes the need to search for and copy data by hand, so large amounts of data can be collected quickly and reliably. Sounds interesting, right? In this blog, I will walk you through what list crawling actually is, why it matters, how it works, a real-life example, and much more. Let’s get started.

What is List Crawling?

List crawling is the process of systematically extracting useful data from web pages. A crawler takes a list of URLs, visits each one, and pulls out the fields you need. Picture this situation: you want to collect several pieces of information from many websites at once. Doing it by hand is a herculean task that demands a lot of effort and time.

List crawling simplifies the process by automating the task and returning the information you need. For example, if you need contact details, product prices, and similar details from a set of websites, a list crawler can collect them for you.

Websites Best Suited for List Crawling

Before you fetch information from a website, it is essential to know whether list crawling will work for that site; otherwise, you may run into trouble during the process. Some websites are simple to extract information from, while others are not.

The difficulty usually comes from heavy JavaScript usage or from an irregular page layout, both of which can make reliable data extraction challenging. Below are some types of websites that are simpler and easier to handle.

👉 E-commerce sites

E-commerce sites are well suited to list crawling because they use predictable pagination and a uniform product listing layout, which makes large amounts of data easy to extract. The HTML structure is usually consistent, and pagination is exposed through page numbers or a Next button, which keeps the crawl steady.

👉 Career Sites

On career sites, information is well structured and organized, which makes it easier for a list crawler to find. The uniform layout, paginated listings, and searchable fields help retrieve information consistently.

👉 Business Directories

The layout, entry templates, and standardization of business directories are ideal for data extraction. Because every entry follows the same template, you can fetch information with little effort, and the crawl scales well.

👉 Review Platforms

Repetitive, predictable data schemas and a well-structured layout make review sites a good fit for data extraction. Because every review uses the same components, extraction stays simple even across large datasets.

👉 Professional Networks

Professional networks are also good candidates, since profiles and listings follow a repeatable data structure and a consistent layout. Many of these networks restrict automated access in their terms of service, so check the rules before you crawl.
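Several of the site types above share predictable pagination, and that pattern can be turned into a URL list directly. Here is a minimal sketch, assuming a hypothetical shop at `example.com` that exposes a `page` query parameter. The function name `paginated_urls` and the parameter name are my own choices for illustration, and real sites may paginate differently.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def paginated_urls(base_url, first_page, last_page, param="page"):
    """Build one URL per page by setting a page-number query parameter."""
    parts = urlsplit(base_url)
    # Keep any query parameters already present on the base URL.
    query = dict(parse_qsl(parts.query))
    urls = []
    for page in range(first_page, last_page + 1):
        query[param] = str(page)
        urls.append(urlunsplit(parts._replace(query=urlencode(query))))
    return urls


# Hypothetical listing URL, used only for illustration.
urls = paginated_urls("https://example.com/products?category=shoes", 1, 3)
for url in urls:
    print(url)
```

Sites that rely on a Next button instead of page numbers need a different approach: the crawler reads the link target from each page before it can move on.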

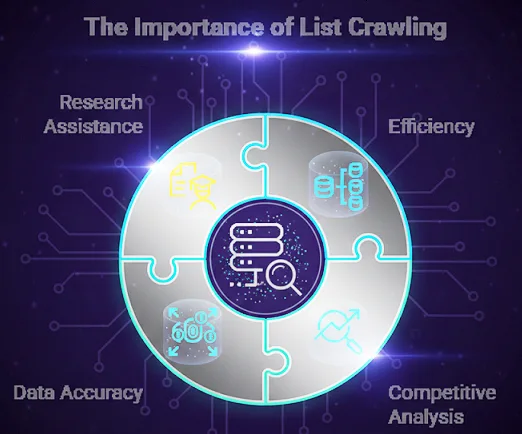

Why Does List Crawling Matter?

List crawling matters for several reasons, listed below.

➡️Efficiency: Extracting data manually from different sources is tedious and demands both effort and time. List crawling simplifies the process so that you can retrieve large volumes of information quickly and reliably.

➡️Competitive analysis: List crawling helps businesses gather information about competitors in their niche and get a better sense of their pricing and product listings, which helps a business set and meet its own targets.

➡️Data Accuracy: Automated extraction avoids the copy-and-paste errors of manual work and produces consistent results, which supports accurate data handling and decision-making.

➡️Assist in researching: Researchers need large amounts of information to analyse and evaluate for their studies. List crawling puts that information at their fingertips.

How Does a List Crawl Work?

A list crawler follows a stepwise process to extract data. Let’s look at the steps below to see how a list crawl works.

✔️ List preparation for crawling

Before you start crawling, prepare a clear list of the URLs that hold the information you want. You can compile the list manually or use a tool to generate it for you.
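A hand-compiled list usually needs a little cleanup before it is ready to crawl. The sketch below shows one way to do that with the Python standard library: it trims whitespace, skips anything that is not an http(s) URL, and removes duplicates. The function name `prepare_url_list` and the sample URLs are assumptions made for this example.

```python
from urllib.parse import urlsplit, urlunsplit


def prepare_url_list(raw_lines):
    """Return a clean, de-duplicated list of http(s) URLs in original order."""
    seen = set()
    cleaned = []
    for line in raw_lines:
        candidate = line.strip()
        if not candidate:
            continue  # skip blank lines
        parts = urlsplit(candidate)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            continue  # skip anything that is not a web URL
        # The #fragment is never sent to the server, so it only creates duplicates.
        normalized = urlunsplit(parts._replace(fragment=""))
        if normalized not in seen:
            seen.add(normalized)
            cleaned.append(normalized)
    return cleaned


# Hypothetical raw input, as it might come from a text file or spreadsheet.
raw = [
    "https://example.com/products?page=1",
    "  https://example.com/products?page=1#reviews ",
    "",
    "ftp://example.com/file",
    "not a url",
    "https://example.com/products?page=2",
]
url_list = prepare_url_list(raw)
print(url_list)
```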

✔️ Crawling Tool selection

Now it’s time to automate the crawling process. For that, you need a crawling tool. Depending on the tool, it may come as a code library, a desktop app, a cloud service, or a browser extension. Scrapy, ParseHub, and Octoparse are some popular choices. The table below compares their interface, target audience, and more.

| Feature | Scrapy | Octoparse | ParseHub |
| --- | --- | --- | --- |
| Primary Audience | Python Developers | Non-coders | Beginners |
| Best For | Large-scale, complex projects | Fast data mining without code | Scraping dynamic/interactive sites |
| Platform | Any OS with Python | Windows / macOS / Cloud | Windows / macOS / Linux / Cloud |
| Interface | Code-based | Point-and-Click (Visual) | Point-and-Click (Visual) |
| Learning Curve | Steep (Requires Python) | Easy to Moderate | Easy |
| Speed | Extremely fast & asynchronous | Moderate (Cloud-dependent) | Moderate |

✔️ Tool configuration

Once you have chosen a tool, configure it for your task. First, decide which data you want to extract: links, text, images, or other elements. You can also add conditions or filters so the tool captures only the data you need.
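To make the idea of configuration concrete, here is a small sketch that uses only the Python standard library. It is not how Scrapy, Octoparse, or ParseHub store their settings. The `CONFIG` dictionary, the CSS class names, and the price filter are all assumptions chosen for this example.

```python
from html.parser import HTMLParser

# Hypothetical configuration: the class names and the filter are assumptions
# for this example, not settings from any particular tool.
CONFIG = {
    "item_class": "product",                        # element wrapping one listing
    "fields": {"name": "title", "price": "price"},  # output field -> CSS class
    "max_price": 50.0,                              # keep items at or below this
}


class ListingParser(HTMLParser):
    """Collect the text of each configured field inside every listing item."""

    def __init__(self, config):
        super().__init__()
        self.item_class = config["item_class"]
        self.class_to_field = {cls: name for name, cls in config["fields"].items()}
        self.items = []
        self.current_field = None

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.item_class in classes:
            self.items.append({})  # a new listing starts here
        for cls in classes:
            if cls in self.class_to_field and self.items:
                self.current_field = self.class_to_field[cls]

    def handle_data(self, data):
        text = data.strip()
        if self.current_field and text:
            self.items[-1][self.current_field] = text
            self.current_field = None

    def handle_endtag(self, tag):
        self.current_field = None  # stop waiting for text once an element closes


def extract(html, config=CONFIG):
    """Parse the HTML, then apply the price filter from the configuration."""
    parser = ListingParser(config)
    parser.feed(html)
    rows = []
    for item in parser.items:
        if set(item) != set(config["fields"]):
            continue  # skip listings with a missing field
        price = float(item["price"].lstrip("$"))
        if price <= config["max_price"]:
            rows.append({"name": item["name"], "price": price})
    return rows


SAMPLE = """
<ul>
  <li class="product"><span class="title">Mug</span><span class="price">$12.50</span></li>
  <li class="product"><span class="title">Lamp</span><span class="price">$80.00</span></li>
</ul>
"""
print(extract(SAMPLE))  # the lamp is dropped by the max_price filter
```

Keeping the selectors and filters in one dictionary means you can point the same code at a different site by editing the configuration rather than the parser.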

✔️ Running the Crawl

Once the configuration is done, you can run the crawl. The tool navigates through the pages on your list and extracts the data you specified. The time needed depends on the complexity of the data and the number of pages you crawl.
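Under the hood, running a crawl comes down to a loop over the URL list. The sketch below is one possible shape for that loop, not the internals of any specific tool. The names `fetch`, `run_crawl`, and `fake_fetcher`, as well as the user-agent string, are assumptions for illustration. The fetcher is passed in as a parameter so the loop can be tried offline.

```python
import time
from urllib.request import Request, urlopen


def fetch(url, timeout=10):
    """Download one page and return its text (assumes UTF-8 content)."""
    request = Request(url, headers={"User-Agent": "list-crawler-demo/0.1"})
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def run_crawl(urls, fetcher=fetch, delay_seconds=1.0):
    """Visit each URL in order and return ({url: html}, {url: error message})."""
    pages, errors = {}, {}
    for index, url in enumerate(urls):
        if index > 0:
            time.sleep(delay_seconds)  # pause between requests
        try:
            pages[url] = fetcher(url)
        except Exception as exc:  # one failed page should not stop the run
            errors[url] = str(exc)
    return pages, errors


def fake_fetcher(url):
    """Stand-in for a real download so the example runs without a network."""
    if url.endswith("/broken"):
        raise ValueError("simulated failure")
    return f"<html>{url}</html>"


pages, errors = run_crawl(
    ["https://example.com/a", "https://example.com/broken"],
    fetcher=fake_fetcher,
    delay_seconds=0,
)
print(len(pages), "pages fetched,", len(errors), "failed")
```

For a real run, you would drop the `fetcher` argument so that `fetch` is used, and keep a non-zero delay.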

✔️ Data analysis

Once the crawl is done, you can export the collected data set, typically as CSV, JSON, or a spreadsheet, for further analysis.
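As a small illustration of this last step, the sketch below writes crawled rows to CSV text and computes a few summary figures with the Python standard library. The sample rows and the function names `to_csv` and `summarize` are made up for the example.

```python
import csv
import io
from statistics import mean

# Hypothetical rows, shaped like the output of a product crawl.
rows = [
    {"name": "Mug", "price": 12.5},
    {"name": "Lamp", "price": 80.0},
    {"name": "Desk", "price": 150.0},
]


def to_csv(rows):
    """Serialize the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def summarize(rows):
    """Return basic price statistics for a quick first look at the data."""
    prices = [row["price"] for row in rows]
    return {
        "count": len(prices),
        "min": min(prices),
        "max": max(prices),
        "mean": mean(prices),
    }


print(to_csv(rows))
print(summarize(rows))
```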

Real-Life Example: How List Crawling Transformed a Business

List crawling can make a real difference to a business if you know how to use it judiciously. Picture the situation. Suppose a small retail business in your neighborhood is struggling to keep pace with fierce competition.

They want a clearer picture of their competitors’ pricing strategy to stay at the forefront. So how do they make it happen? They compile a list of competitor product pages and use list crawling, a form of web scraping, to extract the prices automatically. With that data, they can adjust their own prices and stay ahead in the market.

List crawling can also support SEO work. Crawled data can feed keyword research and content ideas that improve visibility in search results, and it can help identify high-authority sites worth approaching for backlinks.
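Once the prices are collected, the comparison itself is simple. Here is a minimal sketch with invented shop names and prices. The function `undercut_report` is a hypothetical helper for this example, not part of any tool.

```python
def undercut_report(own_prices, competitor_prices):
    """List products that at least one competitor sells for less than we do."""
    report = []
    for product, own_price in own_prices.items():
        offers = competitor_prices.get(product, {})
        if not offers:
            continue  # no competitor data for this product
        cheapest_shop = min(offers, key=offers.get)
        if offers[cheapest_shop] < own_price:
            report.append((product, own_price, cheapest_shop, offers[cheapest_shop]))
    return report


# Invented numbers: our prices and the prices crawled from two competitors.
own = {"mug": 12.5, "lamp": 80.0}
competitors = {
    "mug": {"ShopA": 11.0, "ShopB": 13.0},
    "lamp": {"ShopA": 85.0},
}
print(undercut_report(own, competitors))
```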

Ethics in List Crawling

List crawling must be done judiciously to avoid ethical and legal repercussions. The table below summarizes the main rules to follow.

| Rule | Goal | Action |
| --- | --- | --- |
| Robots.txt | Compliance | Follow the Disallow rules in the robots.txt file at the site’s root directory. |
| Rate Limiting | Server Health | Add delays between requests to avoid overloading the server or getting banned. |
| Usage Rights | Legal Safety | Verify permissions before using data commercially. |
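The first two rules can be checked in code. The sketch below uses `urllib.robotparser` from the Python standard library to filter a URL list against a robots.txt file and to read its crawl delay. The robots.txt content, the URLs, and the user-agent name are made up for the example. A real crawler would load the file from the site with `set_url()` and `read()` instead of parsing a string.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content, parsed from a string so the example runs offline.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_policy(robots_text):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


def allowed_urls(urls, parser, user_agent="list-crawler-demo"):
    """Keep only the URLs this user agent is allowed to fetch."""
    return [url for url in urls if parser.can_fetch(user_agent, url)]


policy = build_policy(ROBOTS_TXT)
candidates = [
    "https://example.com/products",
    "https://example.com/private/admin",
]
print(allowed_urls(candidates, policy))          # the /private/ URL is dropped
print(policy.crawl_delay("list-crawler-demo"))   # seconds to wait between requests
```

The value returned by `crawl_delay` can be passed to `time.sleep` between requests. When a site declares no delay, the method returns `None`, so pick a conservative default of your own. Usage rights cannot be checked by code, so read the site’s terms before you use the data commercially.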

Wrapping up

List crawling is an invaluable tool for businesses. It cuts the time and effort of data collection and supports better decisions. It makes extracting complex data from websites easier and can transform a business’s performance. I hope you now have a clear understanding of list crawling. Still have questions? Leave a comment, and I will be happy to answer.